Monadic testing lets respondents review individual concepts one-by-one. By focusing participants' attention on one stimulus at a time, it delivers actionable deep-dive results for product and pricing decisions.

Monadic Test

Ask respondents to evaluate product concepts and digital assets one-by-one to get a read of their preferences and perceptions with various question types.

Monadic testing is a type of survey research that introduces survey respondents to individual concepts in isolation. It is usually used in studies where independent findings for each stimulus are required, unlike in comparison testing, where several stimuli are tested side-by-side.

It is called monadic (from Greek μοναδικός, or “single”) because each product or concept is displayed and evaluated separately, one at a time.

How to set up monadic testing on Conjointly

The Monadic Block is now available within every automated tool on the Conjointly platform and can easily be added to any study. Choose it from the Additional questions list.

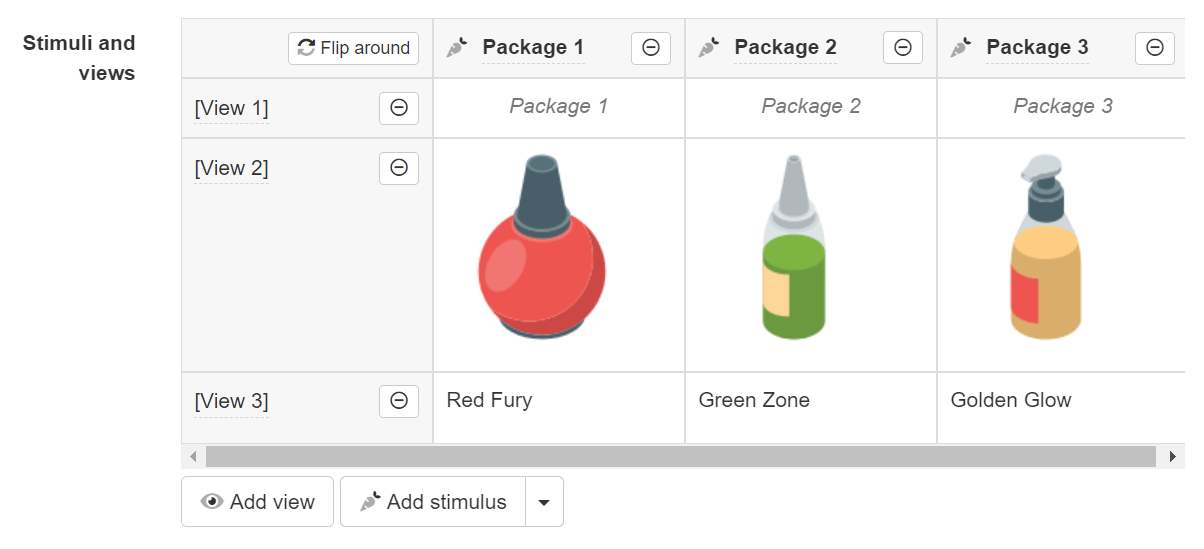

To get started with the setup, create a list of stimuli. You can Import a text list or use variables from your experiment, such as attributes and levels. You can also manually add and modify each stimulus individually.

Views allow you to insert different representations of the concept in the diagnostics question. You can add images, long descriptions, use fancy formatting and lots more.

You will see all your stimuli displayed as columns by default. If you have a long list of concepts and only a few views, you can flip the table to display stimuli as rows instead of columns.

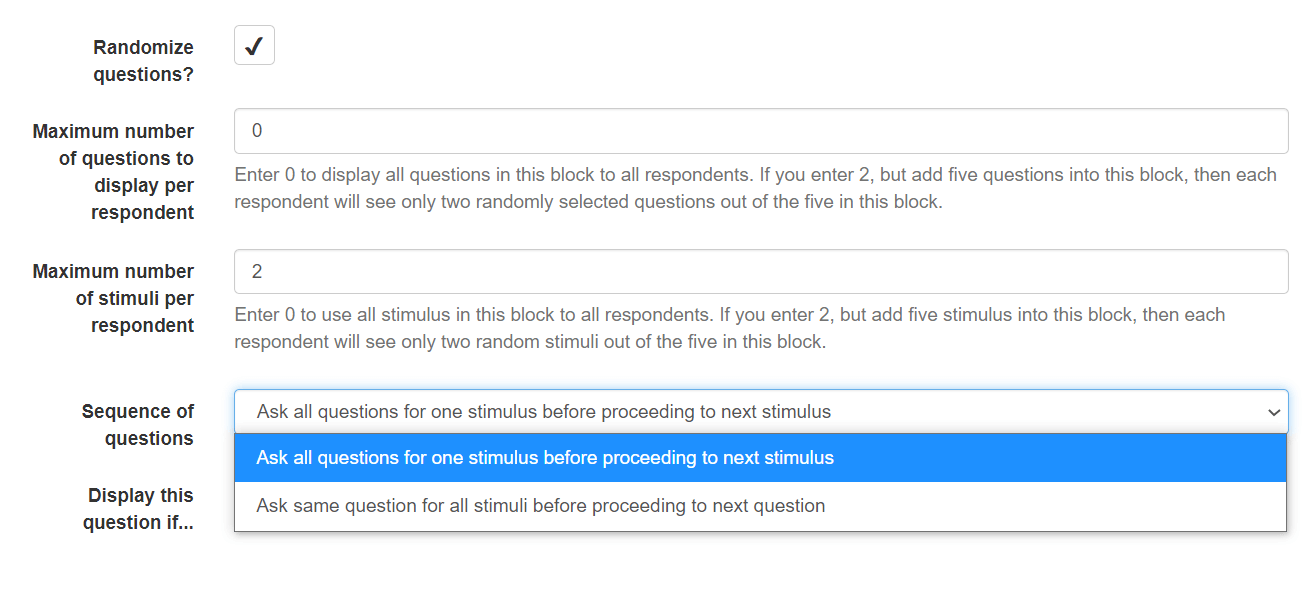

After completing the table of stimuli, you can continue customising the Monadic block. You are given complete control over the type of monadic testing your experiment will run.

Modify the following parameters to change the flow of the survey*:

Randomise questions allows the system to vary the order of the questions inside the monadic block in order to reduce respondent bias.

Maximum number of questions to display per respondent lets you control the survey length by limiting the number of questions for each respondent.

Maximum number of stimuli per respondent helps you get more detailed feedback for each concept without introducing survey fatigue.

Sequence of questions lets you choose between sequential testing and random monadic.

You can also include diagnostic questions in the block by adding a new question or using existing questions from your study. Simply drag and drop any of the additional question types into the Monadic block.

Preview the survey as a participant to test your setup and prepare to launch.

*You will notice that the estimated sample size is automatically recalculated to reflect the needs of the chosen test.
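To illustrate how a cap on stimuli per respondent interacts with randomisation, here is a minimal sketch. It is not Conjointly's actual allocation logic, which is not described here. The function `assign_stimuli`, the least-exposed balancing rule, and the `shuffle_order` flag are all assumptions made for illustration.

```python
import random
from collections import Counter


def assign_stimuli(stimuli, max_per_respondent, exposures, rng, shuffle_order=True):
    """Pick the stimuli one respondent will see (hypothetical balancing rule)."""
    k = min(max_per_respondent, len(stimuli))
    # Shuffle first so that ties between equally exposed stimuli break at random.
    candidates = list(stimuli)
    rng.shuffle(candidates)
    # Assumption: favour the stimuli that have been shown least often so far.
    chosen = sorted(candidates, key=lambda s: exposures[s])[:k]
    if shuffle_order:
        rng.shuffle(chosen)             # random presentation order
    else:
        chosen.sort(key=stimuli.index)  # fixed order, as listed in the table
    for s in chosen:
        exposures[s] += 1
    return chosen


# Simulate 572 respondents, each seeing 7 of 20 concepts.
rng = random.Random(42)
exposures = Counter()
stimuli = [f"Concept {i}" for i in range(1, 21)]
for _ in range(572):
    shown = assign_stimuli(stimuli, 7, exposures, rng)

print(min(exposures.values()), max(exposures.values()))
```

Under this balancing rule, exposure counts across stimuli never differ by more than one, so every concept ends up with roughly the same number of evaluations.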

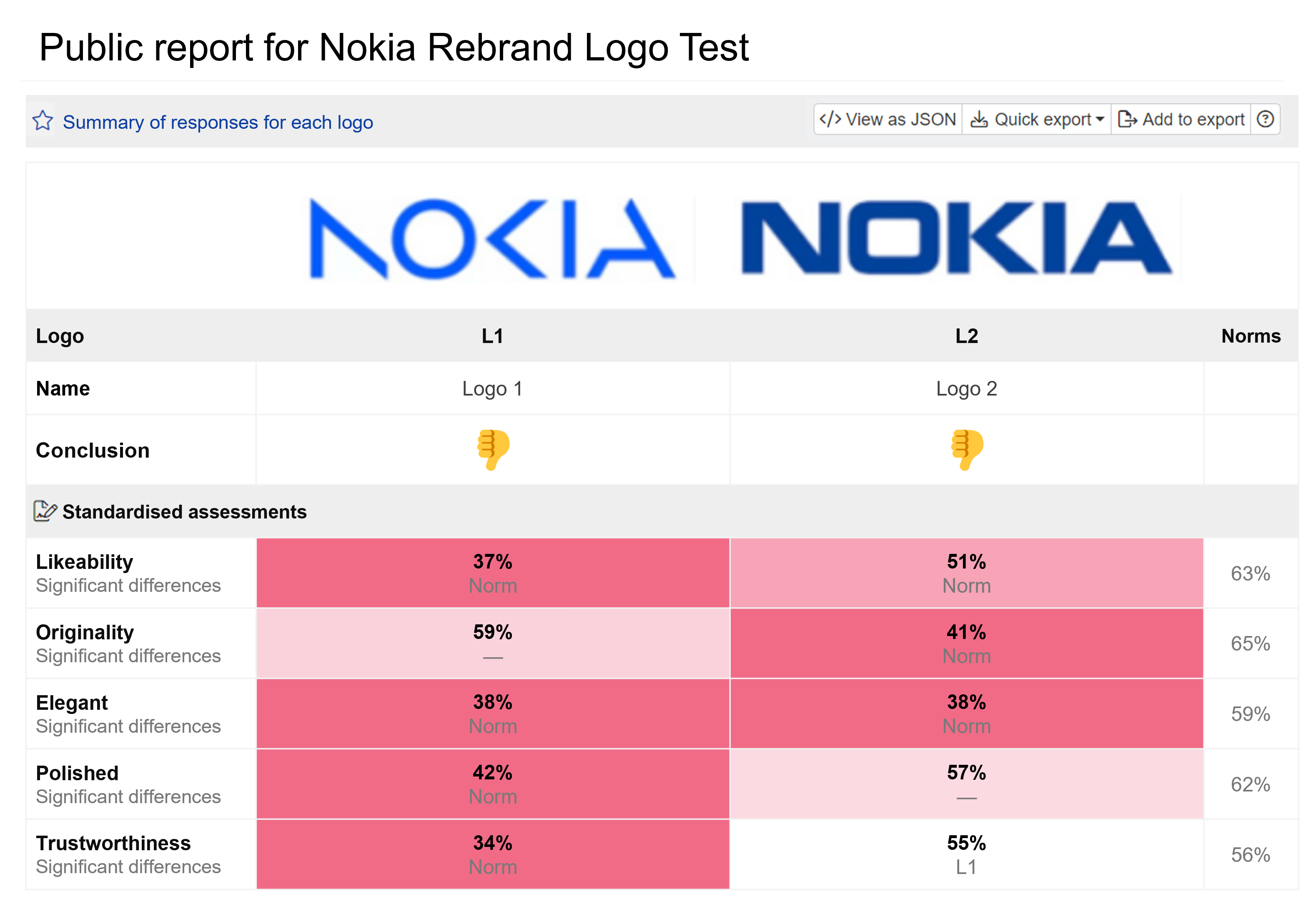

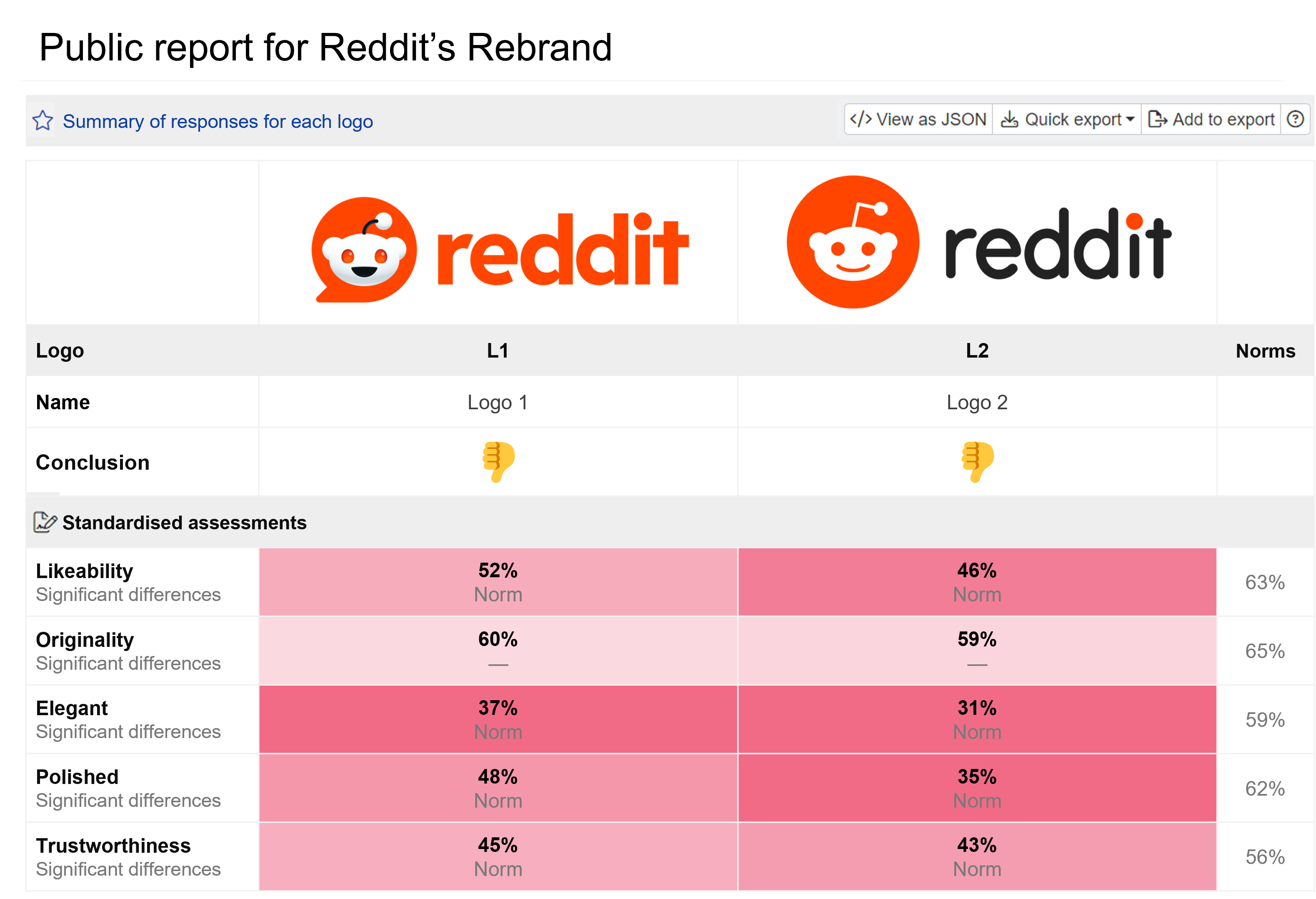

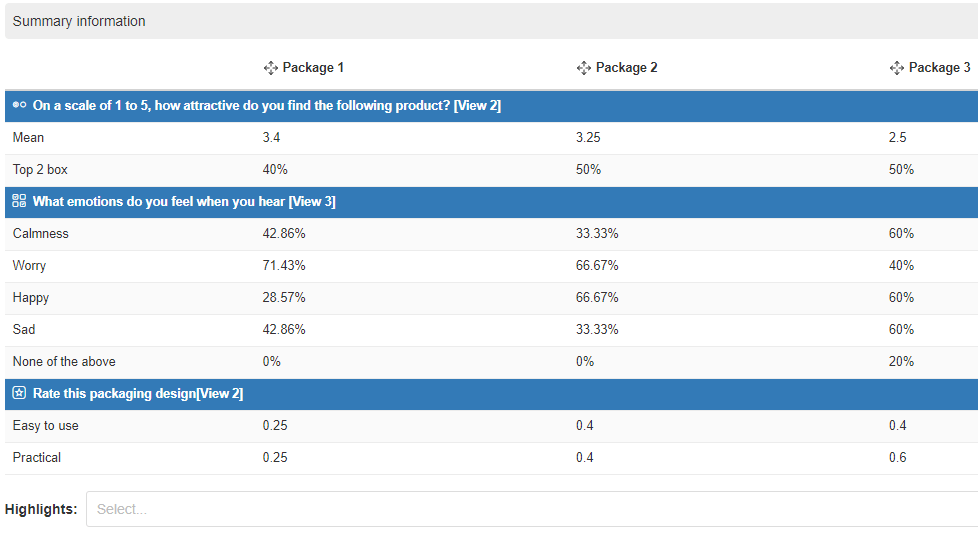

Reports for monadic testing on Conjointly

Get all your insights in one place, organised by questions and stimuli. The reports for monadic tests provide isolated feedback for each concept and also allow you to compare parameters between concepts.

You will also get the ability to highlight an individual concept and deep-dive into the stats. That’s the main perk of concept testing overall.

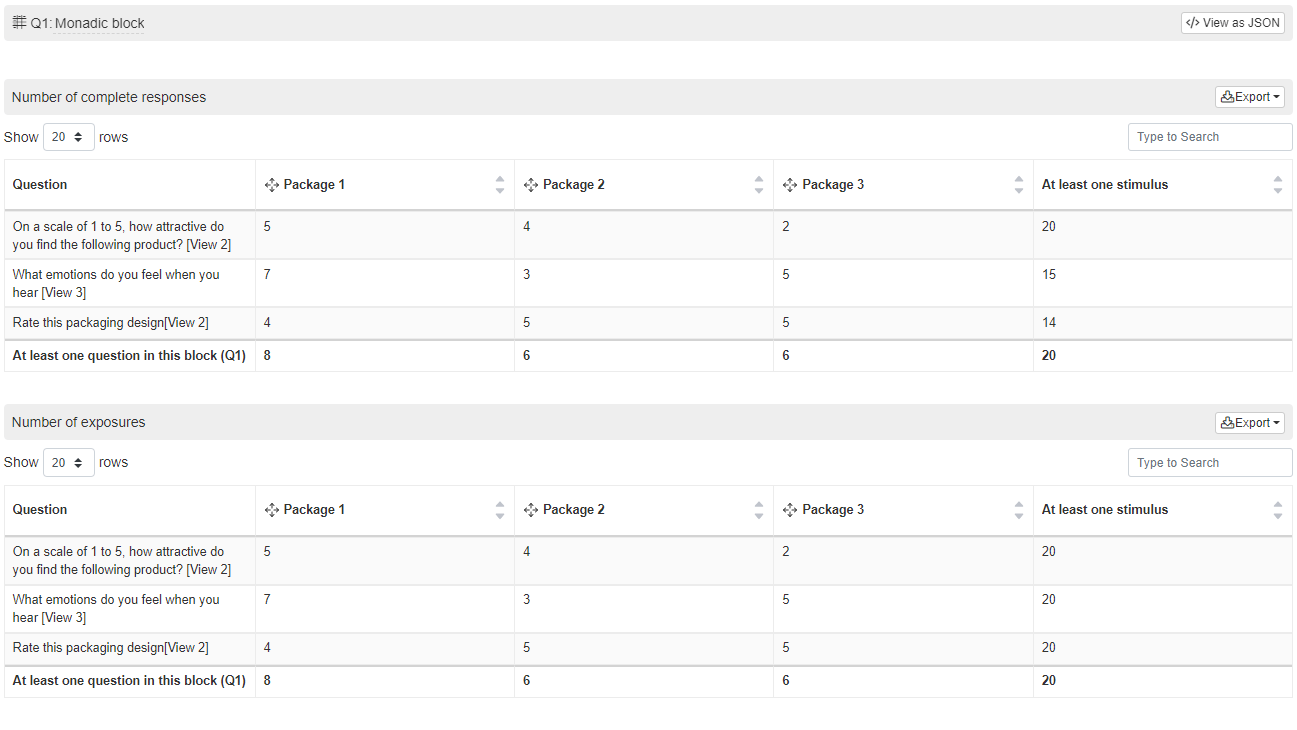

Depending on your chosen method of monadic testing, some concepts and questions will not be shown to every respondent. It is therefore also important to be able to review the number of exposures and complete responses for each question and stimulus.

Types of monadic tests

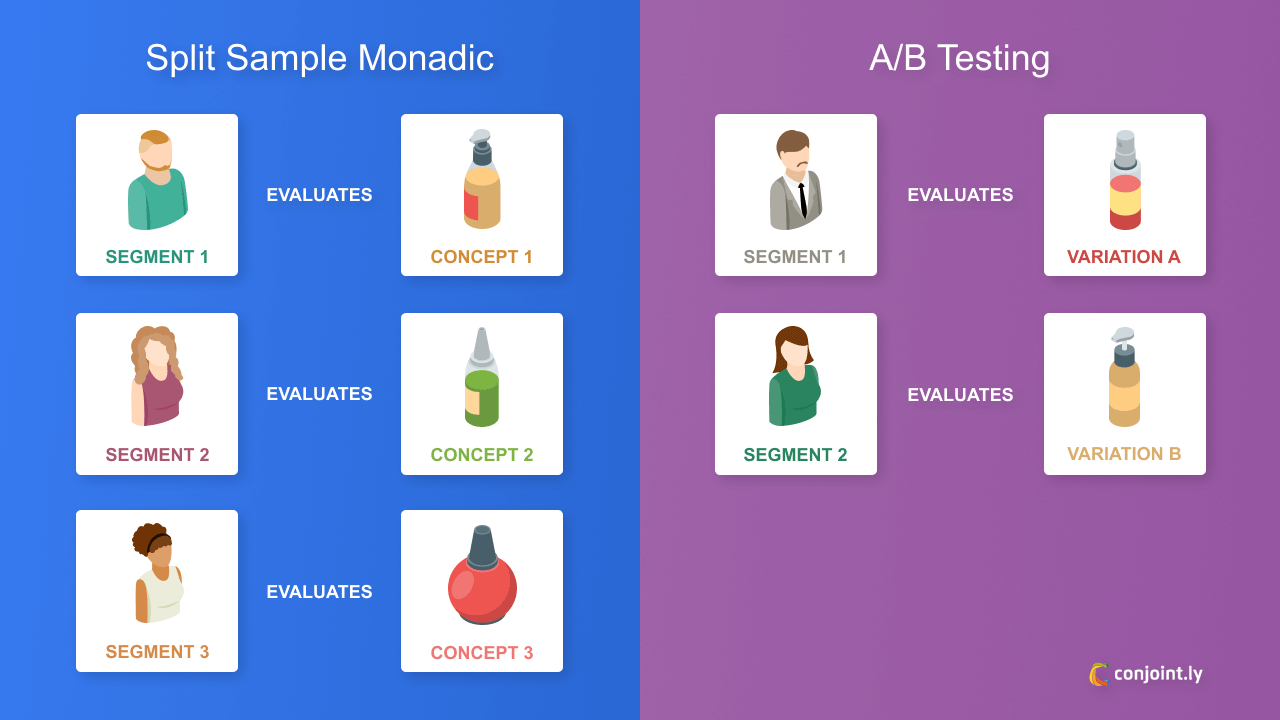

Split-cell monadic

Split-cell monadic testing is a form of monadic testing where each respondent is shown a single concept, followed by a series of questions which ask the respondent to evaluate this concept. As each respondent only evaluates one concept, each additional concept that is added to a split-cell monadic test requires an additional sample of respondents.

Characteristics of split-cell monadic testing:

Larger sample size: Each concept in a split-cell test requires a separate audience.

More detail: By limiting respondents to one concept per test, respondents can be asked more questions in a split-cell test without risking fatigue.

Higher completion rate: The shorter questionnaire style used in split-cell testing encourages a higher completion rate than other methods.

Less bias: Showing concepts in isolation removes the influence from other concepts, reducing order bias.

A/B testing or split testing

A/B testing is a type of monadic testing used to compare two versions of a marketing publication, such as a web page or email, to inform which styles/formats work best. Audiences are shown a “champion” (a previously used format which is expected to perform well) against a “challenger” (a variation of the champion). The winning variation is typically the version with the highest conversion rate.

It is important to note that respondents cannot compare variations in an A/B test, as each option is only shown to one set of respondents. Businesses should source samples with this in mind to ensure the audience profile of each testing cell aligns with the other. Otherwise, results could simply reflect different audiences having different preferences.

Characteristics of A/B testing:

Hypothesis-based: A/B testing requires a clear hypothesis to be effective, e.g. that a short-form blog article will perform better than long-form. Without specific expectations, a business cannot determine the outcome of an A/B test and the results will not be as useful for future decision-making.

Limited scope: Businesses should only test one variation at a time in an A/B test, otherwise they will not be able to determine which specific variation affected respondents’ preferences. After individual testing, several winning variations can be tested together in combination against an individual variation.

Long-term pay-off: The long-term return-on-investment of a well-executed A/B test can be measured quite clearly. One test can prevent a series of failed campaigns by detecting a pain point or vital inclusion early on, and save time, money, and resources spent developing content that has no performance expectations.

Strict sampling: Sample creation should be done carefully in A/B testing as the accuracy of results relies upon both variants being shown to comparable samples. If the samples are not matched, the results will be inconclusive.
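Picking a winner by conversion rate usually involves checking whether the observed difference is larger than chance would explain. The sketch below uses a pooled two-proportion z-test, which is one common choice and an assumption here, since this article does not prescribe a specific test. The function name and the example counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for conversion rates of variants A and B."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled conversion rate under the null hypothesis of no difference.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("conversion rates are all 0% or all 100%; test is undefined")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: champion converts 100 of 1000, challenger 130 of 1000.
z, p_value = two_proportion_z_test(100, 1000, 130, 1000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

A small p-value suggests the difference in conversion rates is unlikely to be noise, but only if the two cells have comparable audience profiles, as noted above.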

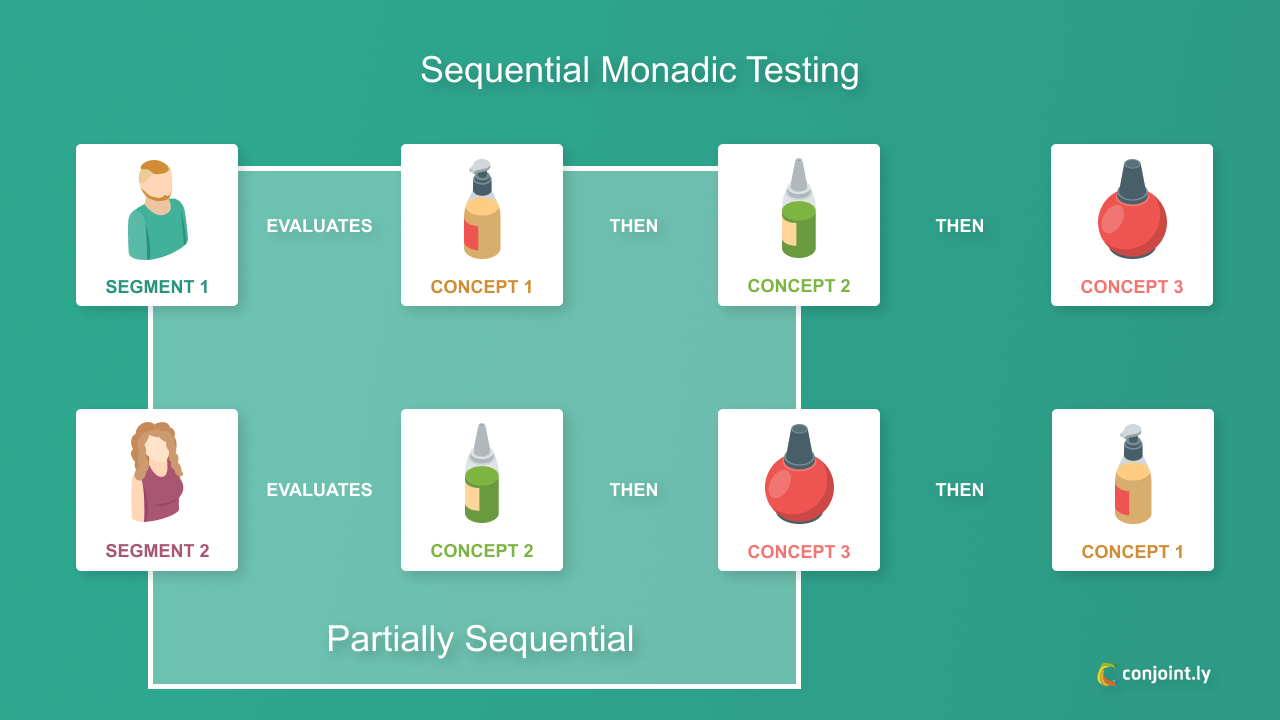

Sequential monadic

Sequential monadic testing is a variation of monadic testing where each respondent is shown either all potential concepts or a limited number of concepts, followed by the same evaluation questions for each. It differs from split-cell monadic testing in that, although only one stimulus is shown at a time, each respondent goes on to see alternative concepts as well. The sample size for sequential monadic tests can be much smaller as individual respondents can provide answers for multiple concepts.

It is an ideal testing method for finding out why respondents prefer one product over another, without distraction from the other options. Gabor-Granger is a type of sequential monadic testing.

Characteristics of sequential monadic testing:

Smaller sample size: As respondents can be shown several concepts per test rather than a single concept, sequential monadic tests can use a smaller sample size than a basic monadic test.

Lower completion rate: When several concepts are shown, the questionnaire becomes lengthier which can affect completion rate as well as quality of answers.

Potential bias: Respondents’ answers may be influenced by the other concepts they have been shown.

Cost-effective: The smaller sample size required makes sequential monadic a more cost-effective option than other forms of monadic testing.

Discrete choice as an alternative technique

Discrete choice analysis involves examining datasets that contain choices made by people from among several alternatives. It is usually used to understand what drove people to make these choices. In a discrete choice test, respondents are shown several product concepts (choice sets) which are broken down into individual components (attributes and levels). Respondents are then tasked with choosing which option they prefer. Through analysis of these responses, businesses can determine the most important and preferred features among respondents.

For example, through discrete choice analysis, a business could determine how weather affects consumers’ decision to eat out, order food delivery, or cook at home. Choice-based conjoint is a type of discrete choice analysis.

Characteristics of discrete choice analysis:

Real-life scenarios: Consumers’ decisions are usually based on several factors with varying impact on preference. Discrete choice analysis reflects this reality by breaking products down into features.

Shorter questionnaire: If the recommended length is followed (8-12 questions), discrete choice usually takes less time to complete than other experiment types.

Varied sample size: Sample size for a discrete choice experiment depends upon the number of choice sets included and the complexity of the study.

Potential fatigue: Businesses should remain conscious of the number of choice sets and features respondents are shown, as too many can not only complicate the study but confuse respondents and cause fatigue.

Sample sizes required

Below are the formulae for required sample size depending on the type of monadic test.

| Type of monadic | Sample size (N) |
|---|---|
| A/B test | (Number of respondents per stimulus) × 2 |
| Split sample | (Number of respondents per stimulus) × (Total number of stimuli in the test) |
| Partially sequential | (Number of respondents per stimulus) × (Total number of stimuli in the test) / (Number of stimuli per respondent) |
| Sequential | (Number of respondents per stimulus) |

For example, if you:

- need 200 responses for each stimulus (which is usually enough if you have a series of Likert scale questions),

- have 20 stimuli to test,

- show only 7 stimuli per participant,

you will need 200×20/7 ≈ 570 respondents for your test.
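The four formulae are all special cases of the partially sequential one, which a short sketch can make explicit. The function name `required_sample_size` is hypothetical, and rounding up to a whole respondent is an assumption. That is why the worked example gives 572 rather than the rounded figure of about 570.

```python
import math


def required_sample_size(per_stimulus, total_stimuli, stimuli_per_respondent):
    """Respondents needed so that each stimulus collects `per_stimulus` responses."""
    if not 1 <= stimuli_per_respondent <= total_stimuli:
        raise ValueError("stimuli_per_respondent must be between 1 and total_stimuli")
    # Assumption: round up, since a fraction of a respondent cannot be recruited.
    return math.ceil(per_stimulus * total_stimuli / stimuli_per_respondent)


print(required_sample_size(200, 2, 1))    # A/B test: 400
print(required_sample_size(200, 20, 1))   # split sample: 4000
print(required_sample_size(200, 20, 7))   # partially sequential: 572
print(required_sample_size(200, 20, 20))  # sequential: 200
```

Setting one stimulus per respondent reproduces the split-sample and A/B rows, while showing every stimulus to every respondent reproduces the sequential row.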

Best use and examples of monadic testing

Monadic concept testing

Monadic concept tests provide clear, detailed feedback about products as they allow respondents to focus exclusively on a product idea.

By gaining insights on a single product at a time, this testing method draws out in-depth findings about consumers’ broader opinions surrounding it, such as brand acceptance, and preference for competition.

Monadic price tests

Monadic price tests present respondents with an individual product at a single price, asking questions about acceptance, intent, or other pricing-related topics. They provide robust results for price sensitivity as only one price is shown for each product at a time, eliminating influence from other pricing options.

Splitting the total sample into separate sample cells allows the study to gauge respondents’ attitudes to different price points. Each choice is analysed cell-by-cell to provide an estimate of demand. It should be noted that:

- Monadic tests do not usually consider competitor pricing, which can have a significant effect on research outcomes.

- They also require quite large sample sizes, making them a more costly option when compared to discrete choice experimentation aka conjoint analysis.
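The cell-by-cell analysis described above can be sketched as a simple tally of acceptance per price cell. This is an illustration only: the function name, the yes/no purchase-intent measure, and the example responses are assumptions, not real data or a documented method.

```python
from collections import defaultdict


def demand_by_price_cell(responses):
    """Share of respondents in each price cell who said they would buy."""
    shown = defaultdict(int)
    accepted = defaultdict(int)
    for price, would_buy in responses:
        shown[price] += 1
        accepted[price] += int(would_buy)
    # One demand estimate per cell, ordered from lowest to highest price.
    return {price: accepted[price] / shown[price] for price in sorted(shown)}


# Hypothetical responses: each respondent saw exactly one price.
responses = [
    (9.99, True), (9.99, True), (9.99, False), (9.99, True),
    (12.99, True), (12.99, False), (12.99, False), (12.99, False),
]
print(demand_by_price_cell(responses))  # {9.99: 0.75, 12.99: 0.25}
```

Because each respondent contributes to only one cell, every extra price point needs its own sample, which is where the larger sample sizes come from.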

Advantages of monadic testing in survey design

There are many advantages of using monadic testing in survey design, including:

User-friendliness

Monadic tests are shorter in length as they only task the respondent with one stimulus at a time, lessening repetition and respondent fatigue.

Ability to deep-dive

Respondents focus their attention on a specific concept for a few minutes, so you can ask them various questions about that concept.

Conclusion

Monadic testing is advantageous when isolated feedback for product concepts or pricing is desired. Its benefits include realistic design, reduced bias, user-friendliness, and accurate results. Even so, monadic tests should be weighed carefully as an option for pricing and concept research, as they can be costlier than other survey designs due to the large sample size required.